Universities are still running exams, but where those exams happen has changed. Walk into a lecture hall and, in some places, you’ll still see paper attendance sheets and handwritten scripts. Behind the scenes, though, a quieter revolution is underway: assessments that once tied institutions to paper, printing schedules, and exam halls have moved into software. Administrators juggle multiple campuses, exam officers race to publish results before the next registration window, and faculty need reliable ways to measure learning at scale. For all of them, online exam management systems are no longer an experimental add-on. They are now core infrastructure.

The reasons are practical and urgent. Paper-based processes scale poorly. Physical logistics carry cost and risk, and manual marking leaves students waiting a long time for results. Human errors, from misfiled scripts to arithmetic mistakes when results are compiled, trigger avoidable rechecks and appeals. That’s why more universities are looking beyond simple quiz forms to purpose-built platforms that manage every stage of the exam lifecycle, from item banking to result publication. International bodies such as UNESCO and regional education initiatives have also framed digital transformation in education as a strategic priority, which has helped accelerate institutional investment in secure assessment tools.

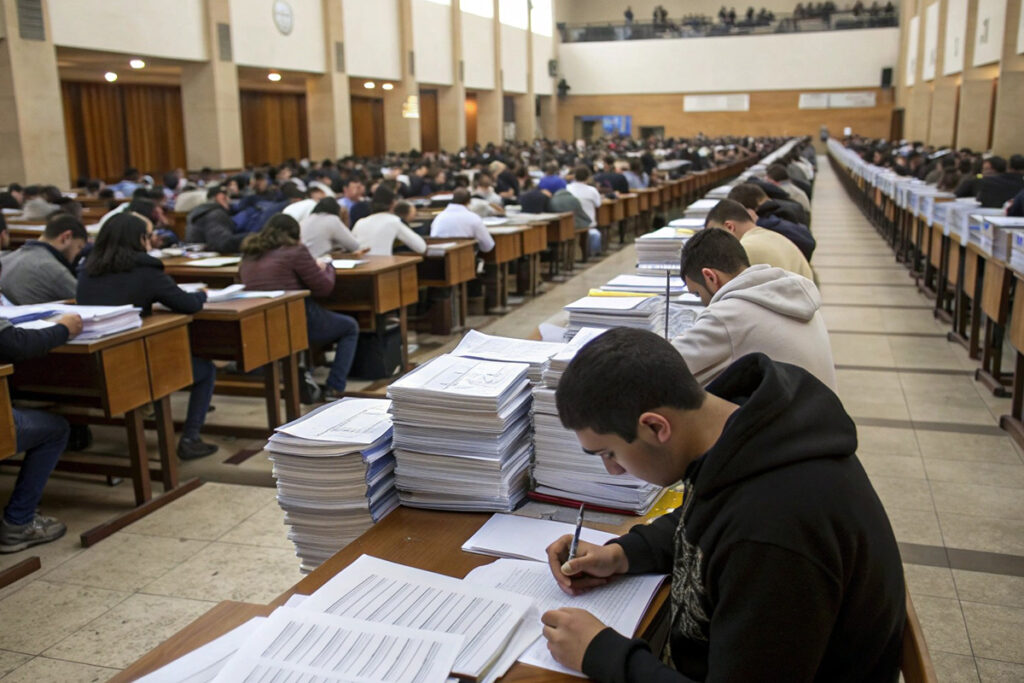

Why paper exams no longer scale

Operational costs balloon when assessments require physical papers, printed timetables, secured storage, sealed transportation, and dedicated invigilation teams. Each of those requirements adds labour and procurement steps that recur every exam cycle. Beyond cost, the reputational risk from leaked papers or compromised test items is real and recurring. Institutions spend both money and goodwill on investigations when the integrity of a paper exam is questioned.

There is also a time-cost problem. Manual marking and result compilation are resource-intensive tasks. Many instructors and marking teams report that manual grading takes up too much of their time and can delay feedback to students by weeks. That bottleneck affects course progression and academic administration. Digital assessment workflows shorten the loop by automating objective scoring and streamlining faculty moderation.

Finally, scale strains invigilation. In large cohorts, maintaining consistent invigilation standards across rooms and campuses is difficult; variation introduces fairness concerns. Online systems give administrators centralised control and logs, which makes standardising rules and reviewing irregularities easier than trying to reconcile handwritten incident reports from dozens of halls.

What an online exam system should control

A robust online exam management system is not just a quiz engine. At a minimum, it must provide:

- Question bank creation and secure storage. Item versioning, tagging by learning outcome, and controlled import/export workflows help institutions maintain a high-quality, reusable question inventory.

- Exam scheduling and time limits. Built-in scheduling reduces clashes and supports different time zones or cohort windows for multi-campus or distance learners.

- Student authentication before access. Strong identity checks reduce impersonation risks and protect the validity of results.

- Auto-submission on timeout and fail-safe rules. Systems should save responses continuously and reliably submit the attempt when the timer expires, even if the candidate’s connection has dropped.

- Instant result processing for objective tests. Auto-grading of multiple-choice and certain short-answer formats creates fast feedback loops.

- Role-based access and audit trails. Controlled access for exam officers, faculty, markers, and external moderators prevents accidental exposure of items and creates an audit log for compliance.
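The timeout and auto-grading requirements in the list above can be illustrated with a short Python sketch. It is a minimal example under stated assumptions, not any platform's implementation. `Attempt`, `sweep_expired`, and `score_objective` are hypothetical names. Time is passed in as plain seconds so the logic is easy to test, and scoring assumes one mark per exact match with an answer key.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class Attempt:
    """One candidate's timed attempt (hypothetical model, not a real platform API)."""
    candidate_id: str
    started_at: float              # seconds since an arbitrary epoch
    duration_s: float              # time limit in seconds
    responses: Dict[str, str] = field(default_factory=dict)
    submitted_at: Optional[float] = None

    @property
    def deadline(self) -> float:
        return self.started_at + self.duration_s

    def save_response(self, item_id: str, answer: str, now: float) -> bool:
        """Autosave one answer; refused once the attempt is submitted or time is up."""
        if self.submitted_at is not None or now >= self.deadline:
            return False
        self.responses[item_id] = answer
        return True


def sweep_expired(attempts: List[Attempt], now: float) -> List[str]:
    """Server-side fail-safe: submit every open attempt whose timer has run out,
    keeping the last autosaved responses even if the client has gone offline."""
    closed = []
    for attempt in attempts:
        if attempt.submitted_at is None and now >= attempt.deadline:
            attempt.submitted_at = attempt.deadline  # record the official cut-off time
            closed.append(attempt.candidate_id)
    return closed


def score_objective(responses: Dict[str, str], answer_key: Dict[str, str]) -> int:
    """Auto-grade objective items: one mark per exact match with the key."""
    return sum(1 for item, correct in answer_key.items() if responses.get(item) == correct)


key = {"q1": "B", "q2": "D", "q3": "A"}
attempt = Attempt("s001", started_at=0.0, duration_s=3600.0)
attempt.save_response("q1", "B", now=100.0)
attempt.save_response("q2", "C", now=200.0)
attempt.save_response("q3", "A", now=3700.0)   # after the deadline: refused
print(sweep_expired([attempt], now=3700.0))     # ['s001']
print(score_objective(attempt.responses, key))  # 1
```

The fail-safe comes from running the expiry check on the server rather than in the browser. A candidate whose connection drops still has their last autosaved answers submitted at the official deadline.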

These are not theoretical features. Leading exam platforms bundle them because they directly address the operational pain points universities face.

Proctoring and cheating control: what works in practice

Academic integrity remains mission-critical, which is why proctoring and monitoring features are a central part of modern exam systems. Effective solutions combine multiple layers:

- Pre-exam ID verification. Candidates upload or capture an ID document and a face image. Some services add knowledge-based challenge questions drawn from public records, or use biometric checks.

- Browser lockdown. Specialised browsers or secure exam apps prevent tab switching, block copying, and disable extensions that could leak content.

- Camera and screen monitoring. Video and screen recordings can be collected and reviewed, while automated algorithms flag suspicious behaviours for human review.

- Randomised questions and variants. Shuffling question order and drawing randomised variants from a question bank reduces the value of sharing answers.
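Question randomisation from a bank can be sketched in a few lines. This is one possible approach, not a specific product's method. Each candidate gets a private random generator seeded from a hash of the exam and candidate IDs. The paper draws a fixed number of items from each topic pool and then shuffles their order. The pool structure, `build_paper`, `candidate_rng`, and `PAPER_SALT` are hypothetical. Seeding deterministically means the same candidate's paper can be regenerated later, for example when reviewing a disputed attempt.

```python
import hashlib
import random
from typing import Dict, List

PAPER_SALT = "replace-with-a-server-side-secret"  # hypothetical; stops papers being predicted from public IDs


def candidate_rng(exam_id: str, candidate_id: str) -> random.Random:
    """Seed a private RNG from the exam and candidate IDs so the same candidate
    always receives the same paper."""
    seed_text = f"{PAPER_SALT}:{exam_id}:{candidate_id}".encode("utf-8")
    digest = hashlib.sha256(seed_text).digest()
    return random.Random(int.from_bytes(digest[:8], "big"))


def build_paper(exam_id: str, candidate_id: str,
                pools: Dict[str, List[str]], per_pool: Dict[str, int]) -> List[str]:
    """Draw per_pool[topic] distinct items from each topic pool, then shuffle the order."""
    rng = candidate_rng(exam_id, candidate_id)
    paper: List[str] = []
    for topic in sorted(pools):          # fixed topic order keeps the draw reproducible
        paper.extend(rng.sample(pools[topic], per_pool[topic]))
    rng.shuffle(paper)                   # candidates also see questions in different orders
    return paper


pools = {
    "algebra": ["ALG-01", "ALG-02", "ALG-03", "ALG-04"],
    "geometry": ["GEO-01", "GEO-02", "GEO-03"],
}
per_pool = {"algebra": 2, "geometry": 1}
paper_a = build_paper("MATH101-final", "s001", pools, per_pool)
paper_b = build_paper("MATH101-final", "s002", pools, per_pool)
print(paper_a == build_paper("MATH101-final", "s001", pools, per_pool))  # True
print(len(paper_a), len(paper_b))  # 3 3
```

Drawing per topic, rather than from one flat pool, keeps every candidate's paper balanced across the same learning outcomes while still varying the specific items.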

Third-party proctoring services and university implementations vary in approach: some use live remote proctors, others use fully automated machine-learning flagging, and some combine both. The important point is that flags are a starting point, not a verdict; faculty review remains essential to distinguish false positives from genuine misconduct.

A word of caution: proctoring tools can raise concerns about equitable access and privacy. Not every exam needs the same level of surveillance.

How Vigilearn supports online exams (operational snapshot)

Vigilearn’s Exam Portal is positioned as an end-to-end assessment layer inside a central campus platform. In practice, that means:

- Exam setup from a central interface. Scheduling, duration, allowed aids, and question sources are configured in one place, which reduces errors caused by fragmented spreadsheets.

- Student data sync. Candidate lists and course enrolments are synchronised from the LMS or administrative panels, so exam rosters reflect the current academic register.

- Secure links per candidate and role-based issuance. Exam access is controlled by permissions and uniquely issued URLs or credentials for each candidate and invigilator.

- Configurable proctoring rules by exam type. Institutions can apply stricter checks for final exams and lighter settings for formative work.

- Real-time faculty monitoring and incident flagging. Staff can observe sessions live and receive system flags for behaviour that merits review.

- Automatic results integration. Objective scores flow directly into gradebooks and student records, while flagged items await faculty moderation.
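How Vigilearn generates its per-candidate URLs isn't described here. The sketch below therefore shows one common, generic pattern for uniquely issued links. The server signs the exam ID, user ID, role, and expiry time with an HMAC, so it can reject links that are forged, altered, or expired. This is not Vigilearn's API. `SECRET_KEY`, `BASE_URL`, `issue_link`, `verify_link`, and the query-parameter names are all hypothetical.

```python
import hashlib
import hmac
import time
from typing import Optional, Tuple
from urllib.parse import parse_qs, urlencode, urlparse

SECRET_KEY = b"replace-with-a-server-side-secret"  # hypothetical; store outside source code
BASE_URL = "https://exams.example.edu/start"        # hypothetical exam entry point


def _sign(exam_id: str, user_id: str, role: str, expires: int) -> str:
    # Assumes IDs and roles never contain "|", so the signed message is unambiguous.
    message = f"{exam_id}|{user_id}|{role}|{expires}".encode("utf-8")
    return hmac.new(SECRET_KEY, message, hashlib.sha256).hexdigest()


def issue_link(exam_id: str, user_id: str, role: str,
               ttl_s: int = 3600, now: Optional[int] = None) -> str:
    """Issue a link bound to one exam, one user, and one role, valid for ttl_s seconds."""
    now = int(time.time()) if now is None else now
    expires = now + ttl_s
    params = {"exam": exam_id, "user": user_id, "role": role,
              "exp": expires, "sig": _sign(exam_id, user_id, role, expires)}
    return f"{BASE_URL}?{urlencode(params)}"


def verify_link(url: str, now: Optional[int] = None) -> Optional[Tuple[str, str, str]]:
    """Return (exam_id, user_id, role) if the link is authentic and unexpired, else None."""
    now = int(time.time()) if now is None else now
    query = {k: v[0] for k, v in parse_qs(urlparse(url).query).items()}
    try:
        exam_id, user_id, role = query["exam"], query["user"], query["role"]
        expires, sig = int(query["exp"]), query["sig"]
    except (KeyError, ValueError):
        return None                              # missing or malformed parameter
    expected = _sign(exam_id, user_id, role, expires)
    # Compare as bytes in constant time; str comparison would reject non-ASCII input.
    if not hmac.compare_digest(expected.encode("utf-8"), sig.encode("utf-8")) or now >= expires:
        return None                              # tampered with, or expired
    return exam_id, user_id, role


link = issue_link("LAW201-final", "s001", "candidate", ttl_s=600, now=1_000)
print(verify_link(link, now=1_100))   # ('LAW201-final', 's001', 'candidate')
print(verify_link(link.replace("role=candidate", "role=invigilator"), now=1_100))  # None
print(verify_link(link, now=2_000))   # None (expired)
```

Binding the role into the signature is what makes role-based issuance enforceable: a candidate cannot edit their link into an invigilator's. A production system would typically also make links single-use and write each verification to the audit log.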

If your university already uses Vigilearn for learning management and records, the Exam Portal’s integration reduces manual exports and re-import cycles, which in turn minimises clerical errors and speeds up publishing final grades. See Vigilearn’s product page for a feature tour.

Turning exam data into actionable insights

The most strategic advantage of online exam platforms is the data they generate. Rather than just storing scores, a modern exam system reports:

- Question-level difficulty and discrimination indices. Faculty can see which items performed poorly and whether a distractor drew an unexpected share of responses.

- Pass/fail and cohort trend analysis. Program leads spot patterns across terms or sections, detecting where instruction or curriculum needs attention.

- Student progression and longitudinal performance. Dashboards that track competency growth across modules help academic advisors and curriculum committees make evidence-based decisions.

- Program-level outcome tracking. Assessment data ties back to learning outcomes and accreditation evidence.
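The item statistics in the list above come from classical test theory. The sketch below uses the textbook definitions, not any particular platform's formulas. The difficulty index of an item is the proportion of all candidates who answered it correctly, so higher means easier. The discrimination index is the proportion correct in the top-scoring group minus the proportion correct in the bottom-scoring group. It uses the top and bottom 27% by total score, a common convention; the point-biserial correlation is a frequently used alternative. Option counts support distractor review. The function names and the ten-candidate data set are hypothetical and purely illustrative.

```python
from collections import Counter
from typing import Dict, Iterable, List


def share_correct(responses: List[Dict[str, str]], idx: Iterable[int],
                  item: str, correct: str) -> float:
    """Proportion of the selected candidates who chose the keyed answer."""
    idx = list(idx)
    return sum(responses[j].get(item) == correct for j in idx) / len(idx)


def item_analysis(responses: List[Dict[str, str]], key: Dict[str, str],
                  group_frac: float = 0.27) -> Dict[str, dict]:
    """Classical item statistics for single-best-answer items.

    difficulty     = share of all candidates answering correctly (higher = easier)
    discrimination = share correct in the top group minus share in the bottom group,
                     groups being the top/bottom group_frac of candidates by total score
    choices        = how often each option was picked (for distractor review)
    """
    totals = [sum(r.get(i) == k for i, k in key.items()) for r in responses]
    ranked = sorted(range(len(responses)), key=lambda j: totals[j])  # ties keep input order
    n_group = max(1, round(len(responses) * group_frac))
    lower, upper = ranked[:n_group], ranked[-n_group:]
    report = {}
    for item, correct in key.items():
        report[item] = {
            "difficulty": share_correct(responses, range(len(responses)), item, correct),
            "discrimination": (share_correct(responses, upper, item, correct)
                               - share_correct(responses, lower, item, correct)),
            "choices": Counter(r.get(item) for r in responses),
        }
    return report


key = {"q1": "A", "q2": "C", "q3": "B"}
answers = ["ACB", "ACB", "ACD", "ABB", "ACA", "BCD", "ADD", "BBB", "BDA", "BBD"]
responses = [dict(zip(["q1", "q2", "q3"], a)) for a in answers]
for item, stats in item_analysis(responses, key).items():
    print(item, stats["difficulty"], round(stats["discrimination"], 2))
# q1 0.6 1.0
# q2 0.5 0.67
# q3 0.4 0.67
```

With only ten candidates, each group holds three people, and ties at the group boundary are broken by input order, so figures from small cohorts are noisy. Low or negative discrimination is a prompt for faculty to review an item, not an automatic verdict on it.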

These analytics turn routine assessment into curriculum intelligence. When poorly performing items are reworked and course content is realigned with difficulty trends, institutions close the loop between testing and teaching. That is where student learning actually improves.